Eyeriss: An Energy-Efficient Reconfigurable Accelerator for Deep Convolutional Neural Networks IEEE ISSCC 2016

- Yu-Hsin Chen MIT

- Tushar Krishna MIT (now with Georgia Tech)

- Joel Emer MIT, NVIDIA

- Vivienne Sze MIT

Email: eyeriss at mit dot edu

Welcome to the Eyeriss Project website!

- A summary of all related papers can be found here. Other related websites and resources can be found here.

- Follow @eems_mit or subscribe to our mailing list for updates on the Eyeriss Project.

- To find out more about other on-going research in the Energy-Efficient Multimedia Systems (EEMS) group at MIT, please go here.

We will be giving a two-day short course on Designing Efficient Deep Learning Systems on July 17-18, 2023 on the MIT campus (with a virtual option). To find out more, please visit MIT Professional Education.

Recent News

- 11/17/2022

Updated the link to our book on Efficient Processing of Deep Neural Networks, available here.

- 4/17/2020

Our book on Efficient Processing of Deep Neural Networks is now available for pre-order here.

- 12/09/2019

Video and slides of the NeurIPS tutorial on Efficient Processing of Deep Neural Networks: from Algorithms to Hardware Architectures are available here.

- 11/11/2019

We will be giving a two-day short course on Designing Efficient Deep Learning Systems at MIT in Cambridge, MA on July 20-21, 2020. To find out more, please visit MIT Professional Education.

- 5/1/2019

Eyeriss is highlighted in MIT Technology Review. [ LINK ]

- 4/21/2019

Our paper "Eyeriss v2: A Flexible Accelerator for Emerging Deep Neural Networks on Mobile Devices" has been accepted for publication in the IEEE Journal on Emerging and Selected Topics in Circuits and Systems (JETCAS). [ paper PDF | earlier version arXiv ]

- All News

Abstract

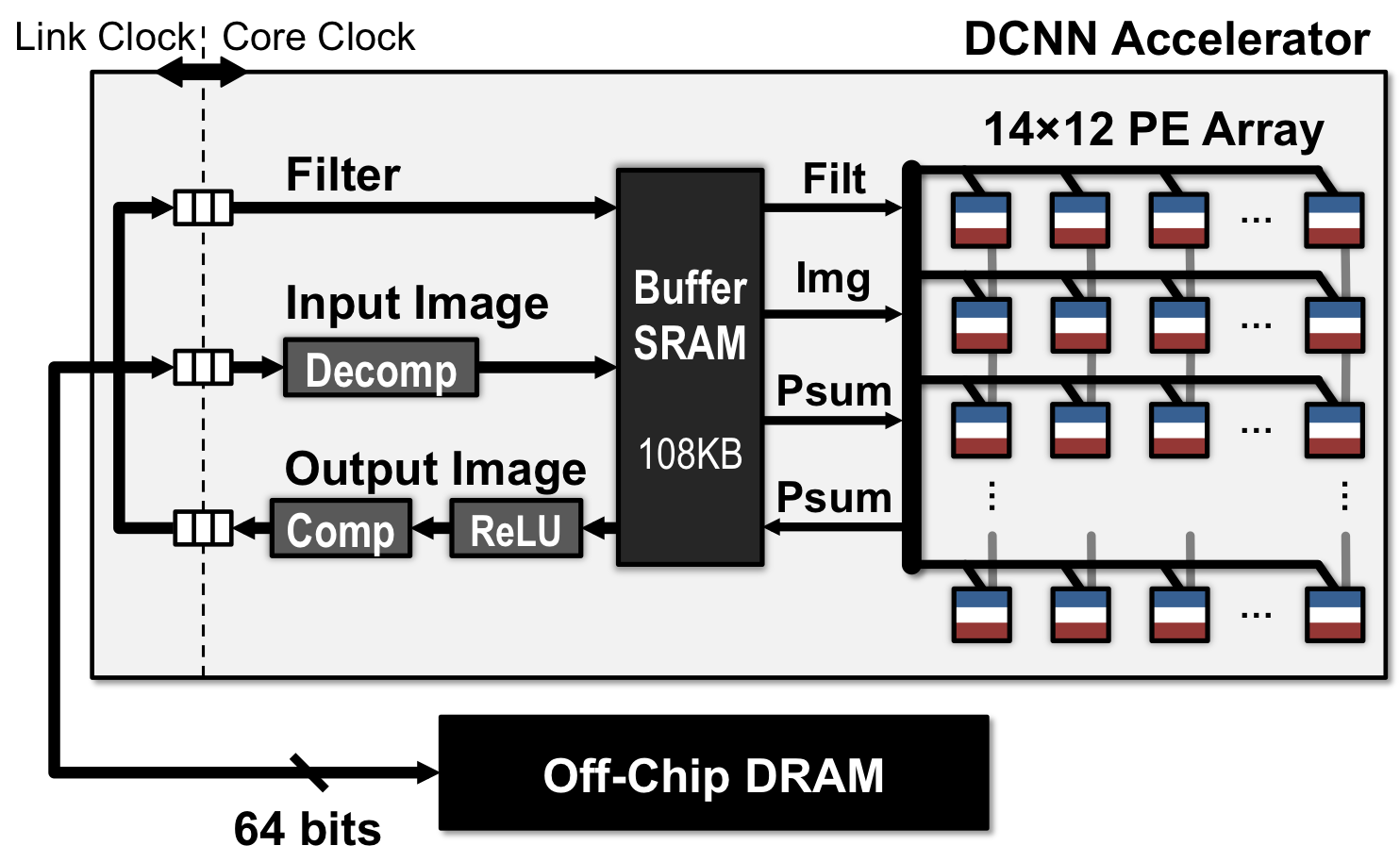

Eyeriss is an energy-efficient deep convolutional neural network (CNN) accelerator that supports state-of-the-art CNNs, which have many layers, millions of filter weights, and varying shapes (filter sizes, numbers of filters and channels). The test chip features a spatial array of 168 processing elements (PEs) fed by a reconfigurable multicast on-chip network that handles many shapes and minimizes data movement by exploiting data reuse. Data gating and compression are used to reduce energy consumption. The chip has been fully integrated with the Caffe deep learning framework. The video below demonstrates a real-time 1000-class image classification task using a pre-trained AlexNet running on our Eyeriss Caffe system. The chip can run the convolutions in AlexNet at 35 fps while consuming 278 mW, which is 10 times more energy efficient than mobile GPUs.
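The throughput and power figures above can be combined into an energy-per-frame number, which is often easier to compare across platforms. The following is a minimal sketch of that arithmetic using only the two figures quoted in the abstract; the function and variable names are illustrative and not part of any Eyeriss tooling.

```python
# Figures quoted in the abstract (AlexNet convolutional layers only).
FRAMES_PER_SECOND = 35.0   # throughput in frames per second
POWER_WATTS = 0.278        # 278 mW


def energy_per_frame_mj(power_watts: float, fps: float) -> float:
    """Return energy per frame in millijoules.

    Power is joules per second, so dividing by frames per second
    gives joules per frame; multiply by 1000 to get millijoules.
    """
    return power_watts / fps * 1000.0


eyeriss_mj = energy_per_frame_mj(POWER_WATTS, FRAMES_PER_SECOND)
print(f"Eyeriss: {eyeriss_mj:.2f} mJ per frame")
```

This comes to roughly 7.9 mJ per frame for the convolutional layers. It covers only the chip power and workload stated in the abstract, not the full system.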

Eyeriss Architecture

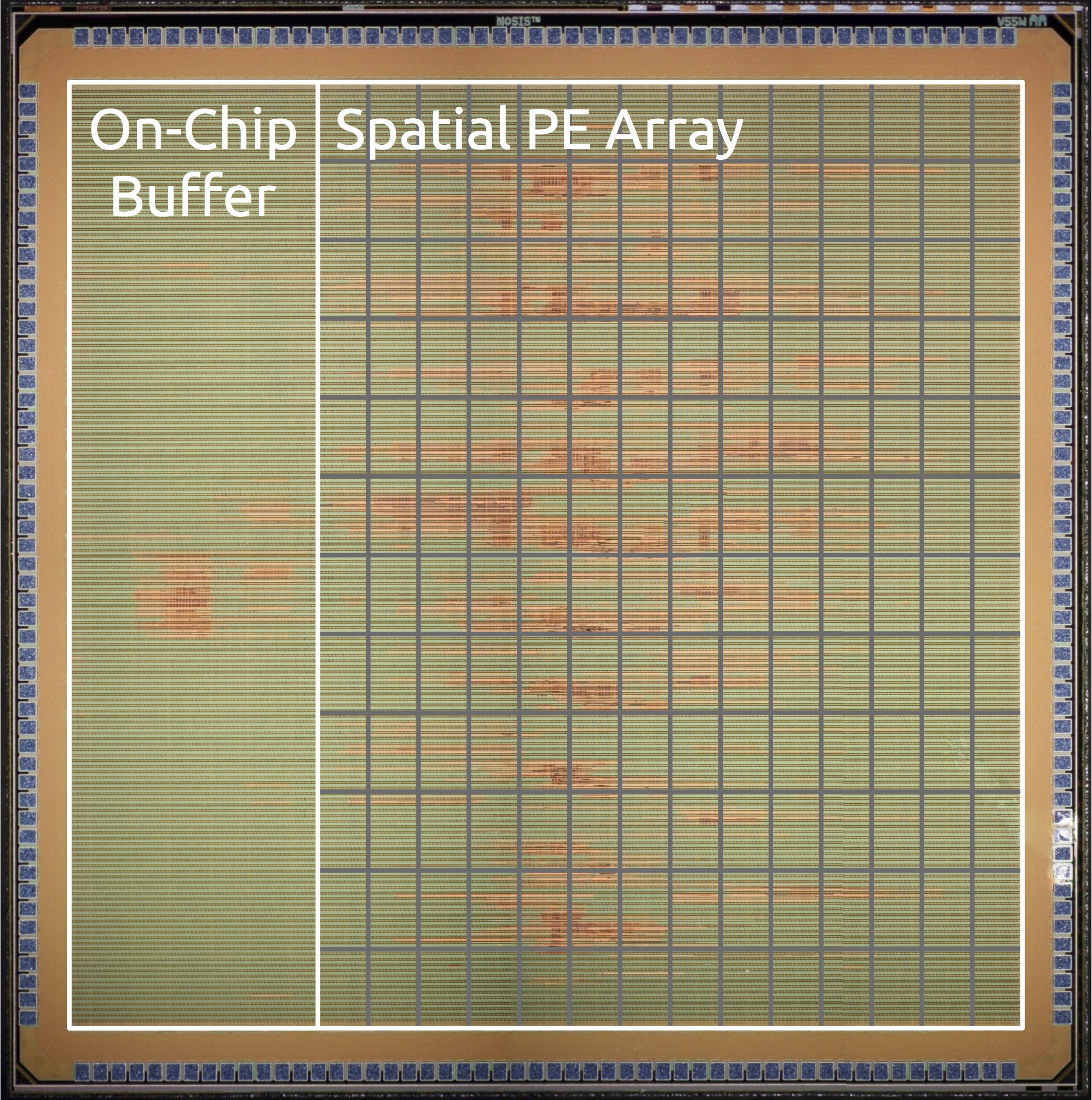
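The abstract mentions data gating as one way the chip reduces energy. As a rough software analogy, and assuming the gating is triggered by zero-valued input activations (this page does not spell out the condition), a processing element can skip the multiply-accumulate whenever its input is zero. The function name `gated_dot` and the sample values below are hypothetical and chosen only for illustration.

```python
def gated_dot(weights, activations):
    """Dot product that skips the multiply-accumulate for zero activations.

    Returns (result, macs_performed, macs_skipped).
    Assumption: gating is keyed on zero-valued activations.
    """
    acc = 0
    performed = 0
    skipped = 0
    for w, a in zip(weights, activations):
        if a == 0:
            skipped += 1   # gated: no multiply, accumulator left unchanged
            continue
        acc += w * a
        performed += 1
    return acc, performed, skipped


# Illustrative data: zeros such as a ReLU layer might produce.
weights = [2, -1, 3, 4, 5, -2]
activations = [0, 3, 0, 0, 1, 2]

result, performed, skipped = gated_dot(weights, activations)
print(result, performed, skipped)
```

The result is the same as an ungated dot product, since a zero activation contributes nothing to the sum; only the amount of work changes. In hardware the saving is switching energy rather than loop iterations, so this sketch shows the idea, not the circuit.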

Die Photo

Video

Press Coverage

Downloads

BibTeX

@inproceedings{isscc_2016_chen_eyeriss,

author = {Chen, Yu-Hsin and Krishna, Tushar and Emer, Joel and Sze, Vivienne},

title = {{Eyeriss: An Energy-Efficient Reconfigurable Accelerator for Deep Convolutional Neural Networks}},

booktitle = {{IEEE International Solid-State Circuits Conference, ISSCC 2016, Digest of Technical Papers}},

year = {{2016}},

pages = {{262-263}},

}

Related Papers

- T.-J. Yang, V. Sze, "Design Considerations for Efficient Deep Neural Networks on Processing-in-Memory Accelerators," IEEE International Electron Devices Meeting (IEDM), Invited Paper, December 2019. [ paper PDF | slides PDF ]

- Y.-H. Chen, T.-J. Yang, J. Emer, V. Sze, "Eyeriss v2: A Flexible Accelerator for Emerging Deep Neural Networks on Mobile Devices," IEEE Journal on Emerging and Selected Topics in Circuits and Systems (JETCAS), Vol. 9, No. 2, pp. 292-308, June 2019. [ paper PDF | earlier version arXiv ]

- D. Wofk*, F. Ma*, T.-J. Yang, S. Karaman, V. Sze, "FastDepth: Fast Monocular Depth Estimation on Embedded Systems," IEEE International Conference on Robotics and Automation (ICRA), May 2019. [ paper PDF | poster PDF | project website LINK | summary video | code github ]

- T.-J. Yang, A. Howard, B. Chen, X. Zhang, A. Go, M. Sandler, V. Sze, H. Adam, "NetAdapt: Platform-Aware Neural Network Adaptation for Mobile Applications," European Conference on Computer Vision (ECCV), September 2018. [ paper arXiv | poster PDF | project website LINK | code github ]

- Y.-H. Chen*, T.-J. Yang*, J. Emer, V. Sze, "Understanding the Limitations of Existing Energy-Efficient Design Approaches for Deep Neural Networks," SysML Conference, February 2018. [ paper PDF | talk video ] Selected for Oral Presentation

- V. Sze, T.-J. Yang, Y.-H. Chen, J. Emer, "Efficient Processing of Deep Neural Networks: A Tutorial and Survey," Proceedings of the IEEE, vol. 105, no. 12, pp. 2295-2329, December 2017. [ paper PDF ]

- T.-J. Yang, Y.-H. Chen, J. Emer, V. Sze, "A Method to Estimate the Energy Consumption of Deep Neural Networks," Asilomar Conference on Signals, Systems and Computers, Invited Paper, October 2017. [ paper PDF | slides PDF ]

- T.-J. Yang, Y.-H. Chen, V. Sze, "Designing Energy-Efficient Convolutional Neural Networks using Energy-Aware Pruning," IEEE Conference on Computer Vision and Pattern Recognition (CVPR), July 2017. [ paper arXiv | poster PDF | DNN energy estimation tool LINK | DNN models LINK ] Highlighted in MIT News

- Y.-H. Chen, J. Emer, V. Sze, "Using Dataflow to Optimize Energy Efficiency of Deep Neural Network Accelerators," IEEE Micro's Top Picks from the Computer Architecture Conferences, May/June 2017. [ PDF ]

- A. Suleiman*, Y.-H. Chen*, J. Emer, V. Sze, "Towards Closing the Energy Gap Between HOG and CNN Features for Embedded Vision," IEEE International Symposium on Circuits and Systems (ISCAS), Invited Paper, May 2017. [ paper PDF | slides PDF | talk video ]

- V. Sze, Y.-H. Chen, J. Emer, A. Suleiman, Z. Zhang, "Hardware for Machine Learning: Challenges and Opportunities," IEEE Custom Integrated Circuits Conference (CICC), Invited Paper, May 2017. [ paper arXiv | slides PDF ] Received Outstanding Invited Paper Award

- Y.-H. Chen, T. Krishna, J. Emer, V. Sze, "Eyeriss: An Energy-Efficient Reconfigurable Accelerator for Deep Convolutional Neural Networks," IEEE Journal of Solid-State Circuits (JSSC), ISSCC Special Issue, Vol. 52, No. 1, pp. 127-138, January 2017. [ PDF ]

- Y.-H. Chen, J. Emer, V. Sze, "Eyeriss: A Spatial Architecture for Energy-Efficient Dataflow for Convolutional Neural Networks," International Symposium on Computer Architecture (ISCA), pp. 367-379, June 2016. [ paper PDF | slides PDF ] Selected for IEEE Micro’s Top Picks special issue on "most significant papers in computer architecture based on novelty and long-term impact" from 2016

- Y.-H. Chen, T. Krishna, J. Emer, V. Sze, "Eyeriss: An Energy-Efficient Reconfigurable Accelerator for Deep Convolutional Neural Networks," IEEE International Solid-State Circuits Conference (ISSCC), pp. 262-263, February 2016. [ paper PDF | slides PDF | poster PDF | demo video | project website ] Highlighted in EETimes and MIT News.

Acknowledgement

This work is funded by DARPA YFA grant N66001-14-1-4039, the MIT Center for Integrated Circuits & Systems, and gifts from Intel and NVIDIA.