
Center of Innovation

At the MIT ORC, our vibrant community of scholars and researchers works collaboratively to connect data to decisions in order to solve problems effectively and impact the world positively.

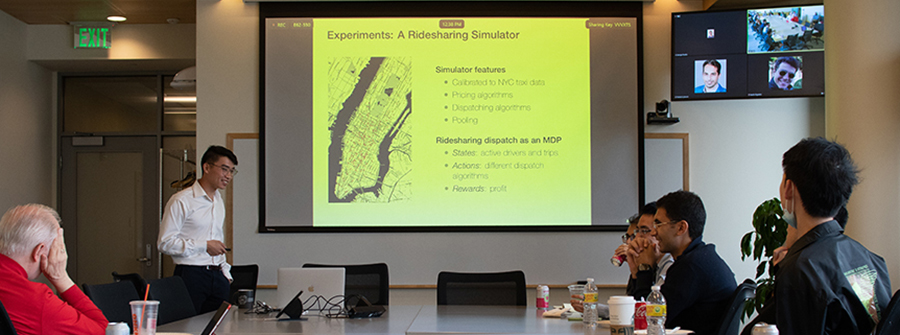

Research

At the MIT ORC, we highly value research and the important role it plays in operations research and analytics. That’s why our students are actively engaged in research from the start.


Global Impact

Our impact can be felt around the world in areas ranging from finance to education to health care. We create mathematical models to help individuals and organizations make smarter decisions—and to improve society as a whole.


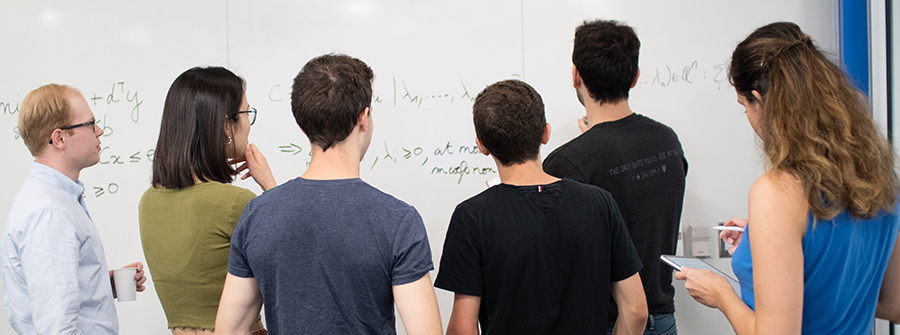

ORC Community

The MIT ORC is made up of a supportive community of creative and talented students and faculty who work together to positively impact the world.


Analytics

Analytics is the science of using data to build models that lead to decisions that add value to institutions, companies, and individuals.

Andy Sun PhD '11

Embracing the future we need

Professor Andy Sun works to improve the electricity grid so it can better use renewable energy.

To build a better AI helper, start by modeling the irrational behavior of humans

To build AI systems that can collaborate effectively with humans, it helps to have a good model of human behavior...

Advancing technology for aquaculture

According to the National Oceanic and Atmospheric Administration, aquaculture in the United States represents a

Drop Date

Last day to cancel subjects from spring term registration
